Recently, I met Varun, a Delivery Manager in a software services organization. We were re-connecting after a gap of a few years. Back then, Varun was a promising young techie and wannabe project manager. As an external consultant and coach, I had mentored him for higher responsibilities in software project management. We met over coffee to catch up. It was gratifying to see Varun in the role he had once aspired to.

While Varun was generally upbeat about his work and life, I could sense that there was something troubling him. He was not quite the bubbly person of the past. With some probing, Varun talked about his current work situation. His CEO was driving AI adoption across the organization, including project management. Delivery Managers like Varun now had specific AI adoption targets. Varun’s goal was to promote AI adoption in project risk management. Risk management was one of Varun’s strengths, and he embraced the challenge.

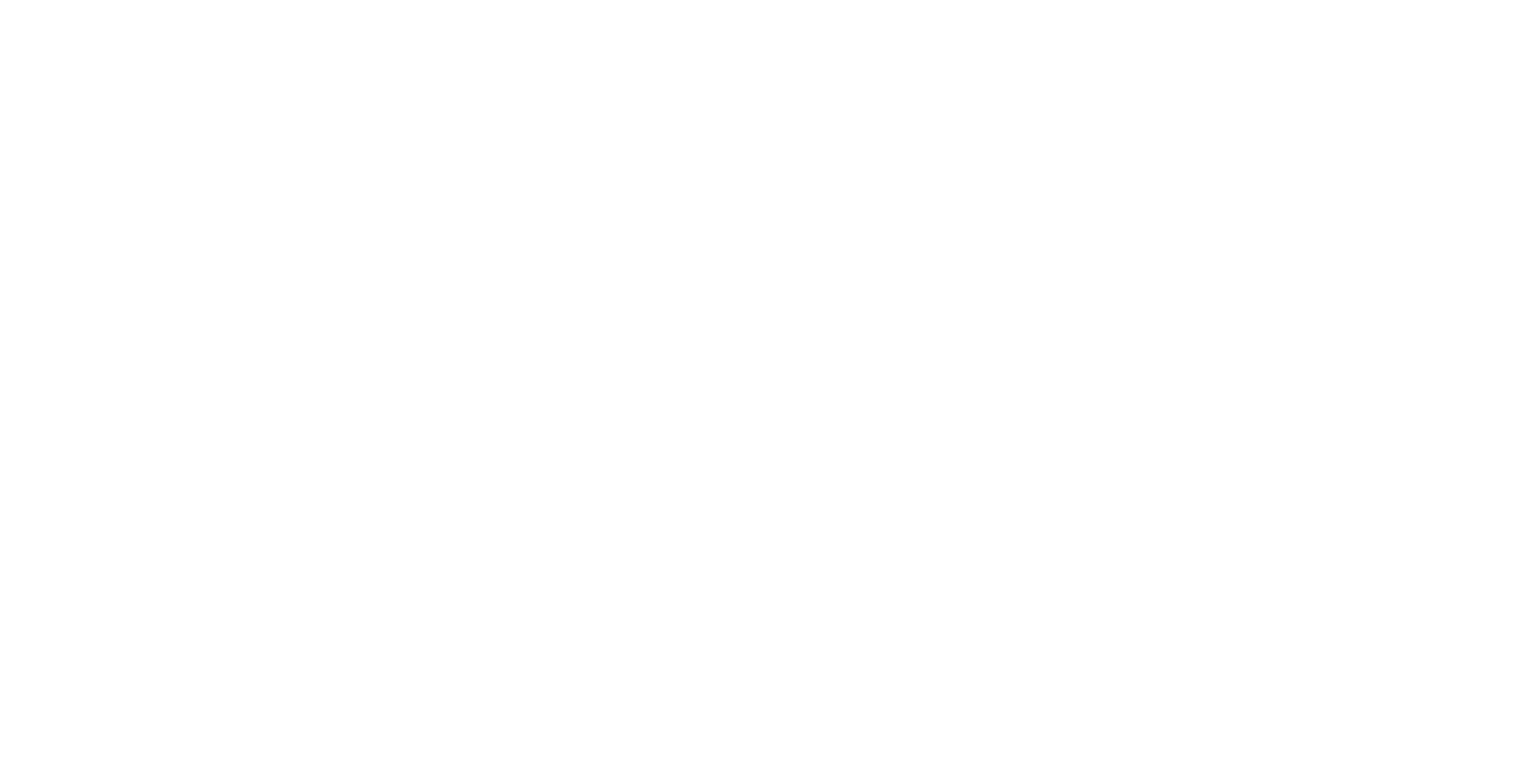

Varun and his team designed an AI-driven approach for near-continuous risk monitoring, replacing periodic manual reviews. Varun outlined a pragmatic high-level architecture comprising:

- Data Sources & Ingestion

- Risk Intelligence (using AI/ML and LLMs)

- Risk Repository

- Workflow Engine

- Human-in-the-Loop Oversight

- Dashboards & Reporting

As Varun and his project teams implemented the architecture, he realized that there were major data quality challenges, particularly with ticket information in Jira. Jira hygiene varied widely across Varun’s projects: barely adequate overall and unacceptable in many. Varun was in a bind: cleaning up Jira data would delay AI adoption, while ignoring it would cripple the initiative. Garbage in would still result in garbage out. What looked like an AI challenge was, in reality, an organizational behaviour challenge around data discipline.

Varun asked for my help. I was glad to re-enter the conversation — this time not as a mentor, but as a thinking partner.

Varun and I set aside an afternoon, brainstormed, and dug into experience and research on data quality issues and ways to address them. Our discussion converged on a few practical insights:

- Poor data quality is common. Tools like Jira are often used for compliance tracking rather than learning. When teams see little value for themselves, data hygiene suffers

- AI adoption and data quality are tightly linked: better data yields better AI outcomes. Rather than aiming for full “continuous risk monitoring” from day one, it is wiser to start with modest sub-goals — for example, predicting delays in critical Jira tickets

- However, even modest sub-goals like the one above require minimal data discipline – key Jira fields, clear descriptions, accurate status changes, and realistic estimates

- Even with imperfect data, early AI pilots can surface insights previously unseen – such as the correlation between poor ticket descriptions and the magnitude of rework. Project teams and other stakeholders begin to see value emerging from AI adoption even in the short term

- As AI begins to deliver value, it exposes data quality gaps, motivating teams to improve them. A reinforcing loop emerges:

- Value → Data Discipline → Better Data Quality → Better AI → More Value
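
To make the modest sub-goal mentioned above (predicting delays in critical Jira tickets) a little more concrete, here is a minimal Python sketch of a naive baseline. It is not the model Varun's teams built. The ticket fields (`priority`, `estimate_days`, `started`, `percent_done`), the `"Critical"` label, and the linear projection rule are all assumptions made for illustration, and do not reflect Jira's actual schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Tuple


@dataclass
class Ticket:
    # Hypothetical, simplified view of a ticket; not Jira's real field names.
    key: str
    priority: str
    estimate_days: Optional[float]
    started: Optional[date]
    percent_done: float  # fraction between 0.0 and 1.0


def projected_overrun_days(ticket: Ticket, today: date) -> Optional[float]:
    """Project days over estimate; return None if key data is missing."""
    if ticket.estimate_days is None or ticket.started is None:
        return None
    elapsed = (today - ticket.started).days
    if ticket.percent_done <= 0:
        # No recorded progress: count only time already past the estimate.
        return max(0.0, elapsed - ticket.estimate_days)
    # Assumption: work continues at the same average pace as so far.
    projected_total = elapsed / ticket.percent_done
    return max(0.0, projected_total - ticket.estimate_days)


def flag_at_risk(
    tickets: List[Ticket], today: date, threshold_days: float = 2.0
) -> Tuple[List[Tuple[str, float]], List[str]]:
    """Return (flagged critical tickets, critical tickets that cannot be scored)."""
    flagged, unscorable = [], []
    for t in tickets:
        if t.priority != "Critical":
            continue
        overrun = projected_overrun_days(t, today)
        if overrun is None:
            unscorable.append(t.key)  # a data quality gap, surfaced explicitly
        elif overrun >= threshold_days:
            flagged.append((t.key, overrun))
    return flagged, unscorable
```

Note that the sketch returns the unscorable tickets alongside the flagged ones. Even a crude baseline like this makes missing estimates and start dates visible, which is the loop described above in miniature.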

The session reassured Varun that there were indeed ways to address the data quality challenge and move AI adoption forward. He then scheduled another session with a few select project teams to develop a short-term plan based on the notes above. I was happy to facilitate it. The session was highly productive and focused on:

- Auditing a sample set of Jira tickets

- Measuring missing field percentages

- Standardizing five core risk-related fields

- Enforcing linkage between Risk, Epic and Defect

- Sanitizing three months of historical data

- Building a delay prediction model for critical tickets

- Publishing insights
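
The audit and measurement steps in the list above could start as something as simple as the following Python sketch. It assumes the sample tickets have already been exported as plain dictionaries. The five field names in `CORE_FIELDS` are hypothetical placeholders, since the actual core fields the teams chose are not listed here.

```python
from typing import Dict, Iterable, List, Mapping

# Hypothetical core fields; substitute whatever the teams standardize on.
CORE_FIELDS: List[str] = [
    "description",
    "status",
    "estimate",
    "risk_category",
    "linked_epic",
]


def is_missing(value) -> bool:
    """Treat None and blank or whitespace-only strings as missing."""
    if value is None:
        return True
    if isinstance(value, str) and not value.strip():
        return True
    return False


def missing_field_percentages(
    tickets: Iterable[Mapping[str, object]],
    fields: List[str] = CORE_FIELDS,
) -> Dict[str, float]:
    """Return, per field, the percentage of tickets where it is missing."""
    sample = list(tickets)
    if not sample:
        return {f: 0.0 for f in fields}
    total = len(sample)
    return {
        f: 100.0 * sum(is_missing(t.get(f)) for t in sample) / total
        for f in fields
    }
```

Running this over a sample of tickets per project gives a simple baseline to track as data discipline improves over the three months.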

By the end of the session, the teams were confident they could achieve this within three months. Based on this, AI adoption in risk management could then spread to the rest of Varun’s portfolio.

Poor data quality is a real barrier to AI adoption. But waiting for perfect data is a mistake. By starting with pragmatic sub-goals, organizations can generate early value, improve data discipline, and gradually build trustworthy risk intelligence. AI does not merely automate risk management — it exposes and strengthens the system behind it.
