What building two Proofs of Concept (PoCs) taught me

I didn’t come back to programming with a plan. I came back with a problem and a lot of uncertainty.

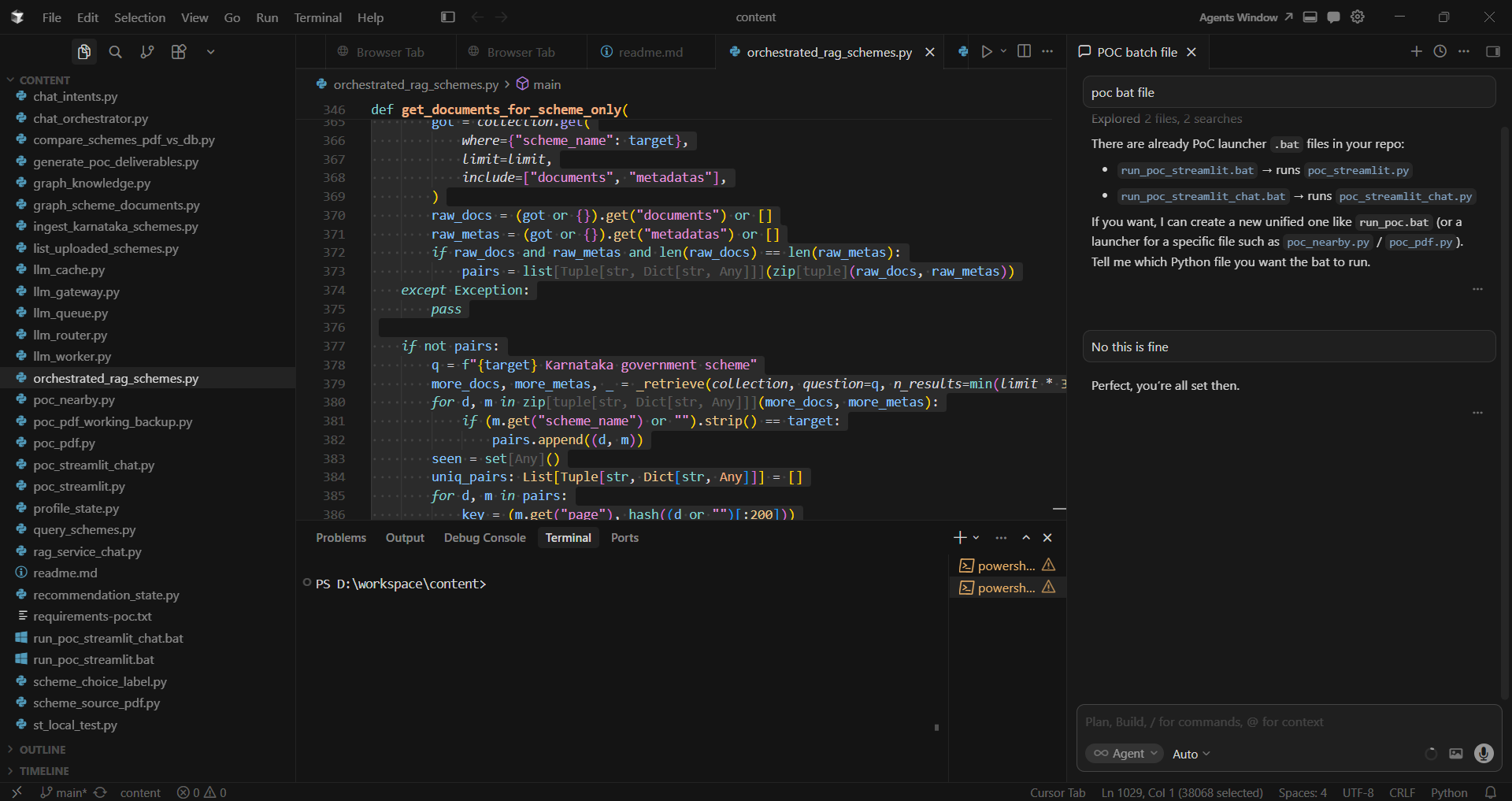

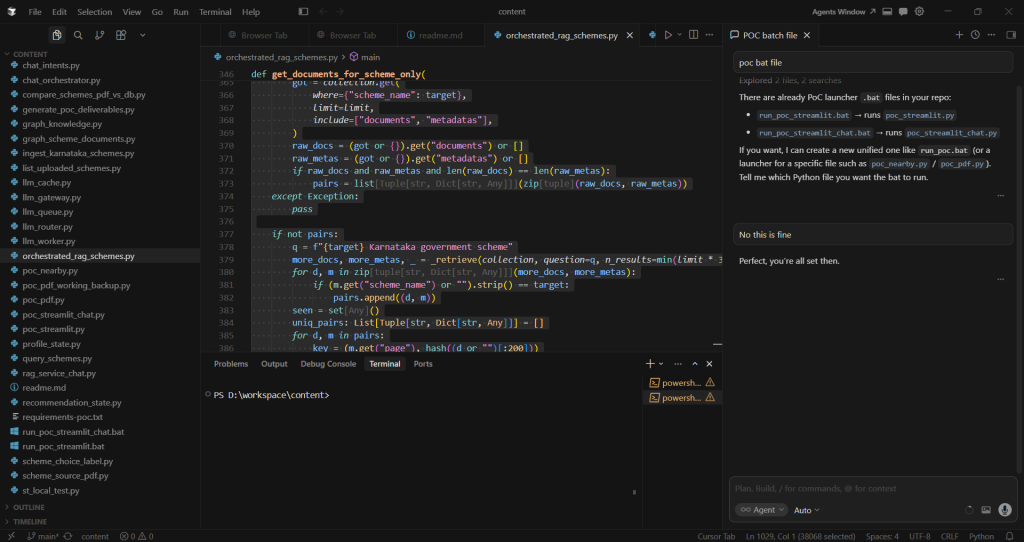

When I started these two proofs of concept, I had no real knowledge of Python, had never used Cursor, and didn’t understand terms like embeddings, vector databases, Chroma DB, or RAG. Even GitHub baselines and branching felt like “things real developers do.”

But two PoCs later, I had working software I could demo, tagged baselines I could return to, and a much clearer understanding of what software development looks like today—especially when AI systems are involved.

There is real magic in using Cursor. Design, coding, and debugging start flowing the moment you describe what you want.

Big thanks to Raja Subramanian Kamakshi Iyer for the 4-hour jumpstart that kicked this all off.

From PDFs to a searchable knowledge base (PoC 1)

The first PoC was simple to describe and hard to execute well: ingest government scheme PDFs into a local vector database, so the system could later answer questions with citations.

This is where I met my first big shift in thinking:

- Search isn’t only keyword matching anymore.

- Meaning-based retrieval works because text can be represented as embeddings.

At the start, “embeddings” sounded like magic. But I learned them the practical way – by following the pipeline end-to-end: PDF → text extraction → chunking → embedding → storing vectors + metadata → querying by similarity.
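For readers who want to see the shape of that pipeline, here is a minimal sketch. It is not the code from the PoC. It assumes the PDF text has already been extracted, and it swaps the real embedding model and Chroma DB for a toy word-count vector and a plain Python list, so it matches on shared words rather than on meaning. Every function name is made up for illustration.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, size: int = 40, overlap: int = 10) -> list[str]:
    """Split text into overlapping windows of `size` words (assumes overlap < size)."""
    words = text.split()
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, len(words), step)]


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a word-count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def ingest(store: list[dict], source: str, text: str) -> None:
    """Chunk the text, embed each chunk, and keep vector + metadata together."""
    for n, chunk in enumerate(chunk_text(text)):
        store.append({"vector": embed(chunk), "text": chunk, "source": source, "chunk": n})


def query(store: list[dict], question: str, k: int = 2) -> list[dict]:
    """Return the k stored chunks most similar to the question."""
    q = embed(question)
    return sorted(store, key=lambda r: cosine(q, r["vector"]), reverse=True)[:k]
```

The `source` and `chunk` fields stored next to each vector are what would make citations possible later: every retrieved chunk can say which file it came from.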

The real lessons weren’t glamorous, but they were real:

- If the script needs arguments, your run button might not provide them.

- If a terminal shows `>>`, you might be stuck in multi-line input – not ‘hung.’

- If you can’t tell whether something is running, add progress logs.
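The first and third lessons can be sketched together. This is an illustrative script skeleton, not the PoC's actual code: the argument names, the `./pdfs` default, and the placeholder file list are all assumptions. The idea is that defaults let the script run even when a run button passes no arguments, and a log line per file shows that something is happening.

```python
import argparse
import logging

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s")
log = logging.getLogger("ingest")


def parse_args(argv=None):
    """Parse arguments; every argument has a default so a bare 'Run' still works."""
    parser = argparse.ArgumentParser(description="Ingest PDFs (illustrative skeleton).")
    parser.add_argument("pdf_dir", nargs="?", default="./pdfs",
                        help="folder with PDFs (hypothetical default)")
    parser.add_argument("--limit", type=int, default=None,
                        help="process at most this many files")
    return parser.parse_args(argv)


def run(files):
    """Process files one by one, logging progress so the run never looks hung."""
    total = len(files)
    for i, name in enumerate(files, start=1):
        log.info("[%d/%d] processing %s", i, total, name)
        # The real extraction and embedding work would go here.
    log.info("done: %d file(s)", total)
    return total


def main(argv=None):
    args = parse_args(argv)
    log.info("source folder: %s (limit=%s)", args.pdf_dir, args.limit)
    # Placeholder list; a real script would scan args.pdf_dir instead.
    files = ["a.pdf", "b.pdf", "c.pdf"]
    if args.limit is not None:
        files = files[:args.limit]
    return run(files)
```

Calling `main([])` mimics a run button that supplies nothing, while `main(["docs", "--limit", "2"])` mimics a terminal run with explicit arguments.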

I also learned what “idempotent” means in practice: making ingestion safe to rerun without duplicating data. That single concept reduces fear and increases speed.
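One common way to get that property is to derive each chunk's ID from its content and write with "insert or overwrite" semantics. The sketch below uses a plain dictionary as a stand-in for the vector store, and the helper names are hypothetical. Stores that accept caller-supplied IDs, as Chroma collections do, should allow the same pattern.

```python
import hashlib


def chunk_id(source: str, index: int, text: str) -> str:
    """Deterministic ID: the same file, position, and text always give the same key."""
    digest = hashlib.sha256(f"{source}|{index}|{text}".encode("utf-8")).hexdigest()
    return digest[:16]


def upsert_chunks(store: dict, source: str, chunks: list[str]) -> int:
    """Write chunks keyed by deterministic ID; return how many were new."""
    added = 0
    for i, text in enumerate(chunks):
        key = chunk_id(source, i, text)
        if key not in store:
            added += 1
        # Overwriting an existing key leaves the store unchanged in size.
        store[key] = {"source": source, "chunk": i, "text": text}
    return added
```

Running `upsert_chunks` twice with the same input adds records only the first time, which is what makes a rerun safe.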

A chat-first experience without breaking the baseline (PoC 2)

The second PoC was a step-change: move from a rigid ‘form-first’ flow to a chat-first experience where the system learns a citizen profile gradually and recommends schemes accordingly.

My constraint was non-negotiable: the original PoC had to keep working exactly as-is. That pushed me toward a clean engineering practice: preserve a baseline and build the new path alongside it.

We had a surprisingly useful debate here.

My instinct was: create a completely separate set of files so I don’t get confused. The counterpoint was: if you duplicate code, every bug fix must be applied twice. That’s how projects slowly break.

The compromise was the right one: new entrypoint, new modules, but shared core logic where possible. Separate doesn’t have to mean duplicated.
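In code, that compromise might look roughly like the following. Everything here is invented for illustration (the scheme data, the `Profile` fields, the function names), and in a real project the three parts would live in separate modules. The point is only that both entrypoints call one shared `recommend` function, so a fix there reaches both flows.

```python
from dataclasses import dataclass


@dataclass
class Profile:
    age: int


# Made-up scheme data, standing in for the real knowledge base.
SCHEMES = [
    {"name": "Senior Pension", "min_age": 60},
    {"name": "Youth Skills Grant", "min_age": 18},
]


def recommend(profile: Profile) -> list[str]:
    """Shared core logic: used unchanged by both entrypoints."""
    return [s["name"] for s in SCHEMES if profile.age >= s["min_age"]]


def form_entrypoint(form: dict) -> list[str]:
    """Baseline path: the whole profile arrives at once from a form."""
    return recommend(Profile(age=int(form["age"])))


def chat_entrypoint(turns: list[dict]) -> list[str]:
    """New path: facts arrive gradually, one chat turn at a time."""
    known = {}
    for turn in turns:
        known.update(turn)
    if "age" not in known:
        return []  # not enough information yet; keep chatting
    return recommend(Profile(age=int(known["age"])))
```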

The “scheme 3” bug: why AI products fail in unexpected ways

One of the most instructive bugs wasn’t a crash. It was inconsistency. The assistant showed a numbered list of schemes, but when I selected ‘scheme 3,’ it claimed there was no scheme 3.

Fixing it taught me an AI-specific lesson: many failures are alignment failures, meaning mismatches between what the user sees and what the system’s internal state actually holds (especially when metadata labels are generic or partially wrong).
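One way to guard against this kind of mismatch, sketched here with a hypothetical `ChatSession` class rather than the PoC's real fix, is to store exactly the list that was rendered and resolve "scheme 3" against that stored list instead of against metadata labels.

```python
class ChatSession:
    """Keeps the displayed list and the selectable list as one and the same."""

    def __init__(self):
        self.shown = []  # exactly what the user last saw, in display order

    def show(self, schemes):
        """Remember the list, then render it with 1-based numbers."""
        self.shown = list(schemes)
        return "\n".join(f"{n}. {s['title']}" for n, s in enumerate(self.shown, start=1))

    def select(self, number):
        """Resolve a user's 'scheme N' against the list that was displayed."""
        if 1 <= number <= len(self.shown):
            return self.shown[number - 1]
        return None  # the caller can ask the user to pick a valid number
```

Because the numbers come from display position, a generic or wrong label inside a scheme's metadata can no longer make item 3 disappear.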

The Gemini model-name moment: I corrected Cursor

My favorite moment was small but meaningful. We were fighting model-name errors with Gemini. Numbered model IDs weren’t working in my environment. But I remembered an alias that had worked earlier: `gemini-flash-latest`. I suggested it, and it worked.

That moment mattered because I wasn’t just ‘being helped.’ I was doing what developers do: observe reality, try a hypothesis, and feed the learning back into the system.
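The same observe-and-retry habit can be written down as a small helper. This is a sketch under assumptions: `probe` is a hypothetical function you supply that makes one cheap call with a given model name and raises if the name is rejected. No real Gemini client call is shown here.

```python
from typing import Callable, Iterable


def first_working_model(candidates: Iterable[str], probe: Callable[[str], object]) -> str:
    """Try model names in order and return the first one the probe accepts."""
    errors = []
    for name in candidates:
        try:
            probe(name)  # hypothetical: one minimal request using this model name
            return name
        except Exception as exc:  # record why each name failed, then move on
            errors.append(f"{name}: {exc}")
    raise RuntimeError("no candidate model worked: " + "; ".join(errors))
```

Putting a known-good alias such as `gemini-flash-latest` at the end of the candidate list turns a remembered workaround into a repeatable fallback.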

Baselines, GitHub, and collaboration

Eventually, the PoCs became more than experiments. They became shareable snapshots: Baseline 1 and Baseline 2. Tagging baselines taught me that a baseline isn’t a folder; it’s a point in history you can always return to.

I also learned how to share code safely: colleagues can clone and work locally, but they can’t change your repository unless you give them write access. Publishing code (even publicly) doesn’t mean losing control. It means choosing how collaboration happens.

What I learned about “rediscovering” development

If I had to summarize the rebirth in a few lines:

- It isn’t about memorizing syntax. It’s about building confidence through small working loops.

- It’s about clarity: logs, reproducibility, baselines, and safe reruns.

- It’s about modern reality: you debug APIs, quotas, model names, and environments—not just code.

And yes, there is real magic in using Cursor. Design, coding, and debugging start flowing the moment you describe what you want.

You still think, decide, and validate. But the distance between idea and implementation becomes dramatically shorter. Sometimes it feels like all you have to do is say the word.

The surprising part is that even when everything is new, not everything is lost. The ability to break down problems, insist on safety, and keep users in mind—that comes back quickly.

Closing

If you’re someone who stepped away from programming and is considering coming back: you don’t need to “know everything.” You need one problem worth solving and the willingness to iterate in public—with your own past self as the first audience.